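Architecture and environment

This article shows how to deploy a highly available Kubernetes cluster from binaries. The Kubernetes version deployed here is 1.27.x. The deployment uses 8 virtual machines running Ubuntu Server 24.04: 3 masters, which also run the 3-member etcd cluster, and 5 worker nodes. The cluster configuration is as follows.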

Cluster network plan

- k8s host network: 172.31.100.0/26

- k8s Service network: 10.96.0.0/16

- k8s Pod network: 192.168.0.0/16
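These three ranges must not overlap. If the host, Service and Pod networks overlap, the result is routing problems that are hard to diagnose later. The following is a minimal bash sketch that checks every pair from the plan above. It assumes IPv4 and bash's 64-bit arithmetic. The function names `ip_to_int`, `cidr_range` and `cidrs_overlap` are illustrative helpers and are not part of any tool.

```bash
#!/usr/bin/env bash
# Illustrative helpers (hypothetical names) to check that IPv4 CIDRs do not overlap.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Print the first and last address of a CIDR (e.g. 10.96.0.0/16) as integers.
cidr_range() {
  local ip=${1%/*} prefix=${1#*/}
  local mask base
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  base=$(( $(ip_to_int "$ip") & mask ))
  echo "$base $(( base + (1 << (32 - prefix)) - 1 ))"
}

# Exit status 0 if the two CIDRs share at least one address.
cidrs_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<< "$(cidr_range "$1")"
  read -r s2 e2 <<< "$(cidr_range "$2")"
  (( s1 <= e2 && s2 <= e1 ))
}

# Check every pair from the network plan above.
plan=(172.31.100.0/26 10.96.0.0/16 192.168.0.0/16)
for ((i = 0; i < ${#plan[@]}; i++)); do
  for ((j = i + 1; j < ${#plan[@]}; j++)); do
    if cidrs_overlap "${plan[i]}" "${plan[j]}"; then
      echo "OVERLAP: ${plan[i]} ${plan[j]}"
    else
      echo "ok: ${plan[i]} ${plan[j]}"
    fi
  done
done
```

With the ranges used in this article, the script should print one `ok` line for each pair.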

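High availability

High availability method: Nginx. Each node runs a local Nginx TCP proxy on 127.0.0.1:8443 that forwards to the kube-apiservers on all three masters, so no VIP is needed.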

IP address information

| Hostname | IP address | OS version | Role |
|---|---|---|---|
| k8smaster01 | 172.31.100.11 | Ubuntu Server 24.04 | master, etcd |
| k8smaster02 | 172.31.100.12 | Ubuntu Server 24.04 | master, etcd |
| k8smaster03 | 172.31.100.13 | Ubuntu Server 24.04 | master, etcd |
| k8snode01 | 172.31.100.14 | Ubuntu Server 24.04 | worker |
| k8snode02 | 172.31.100.15 | Ubuntu Server 24.04 | worker |
| k8snode03 | 172.31.100.16 | Ubuntu Server 24.04 | worker |
| k8snode04 | 172.31.100.17 | Ubuntu Server 24.04 | worker |
| k8snode05 | 172.31.100.18 | Ubuntu Server 24.04 | worker |

Initial configuration

System configuration

Configure /etc/hosts on all nodes

cat >> /etc/hosts <<EOF

172.31.100.11 k8smaster01

172.31.100.12 k8smaster02

172.31.100.13 k8smaster03

172.31.100.14 k8snode01

172.31.100.15 k8snode02

172.31.100.16 k8snode03

172.31.100.17 k8snode04

172.31.100.18 k8snode05

EOF

Install common tools


sudo apt install -y vim bash-completion net-tools lrzsz unzip jq psmisc

Remove preinstalled components

# Completely remove snap and its configuration files

sudo apt remove -y snapd --purge

# Remove cloud-init and automatic upgrades

sudo apt remove -y unattended-upgrades cloud-init

# Clean up leftover dependencies

sudo apt install -f && sudo apt autoremove

Configure the APT repositories on all nodes

# Back up the original file

sudo cp /etc/apt/sources.list.d/ubuntu.sources{,.bak}

# Switch to the root user

sudo -i

# Write the new repository configuration

cat > /etc/apt/sources.list.d/ubuntu.sources <<EOF

Types: deb

URIs: https://mirrors.tuna.tsinghua.edu.cn/ubuntu

Suites: noble noble-updates noble-backports

Components: main restricted universe multiverse

Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

# Source-package mirrors are commented out to speed up apt update; uncomment them if needed

# Types: deb-src

# URIs: https://mirrors.tuna.tsinghua.edu.cn/ubuntu

# Suites: noble noble-updates noble-backports

# Components: main restricted universe multiverse

# Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

# The security repository below points at the official archive; edit the comments to switch to a mirror if needed

Types: deb

URIs: http://security.ubuntu.com/ubuntu/

Suites: noble-security

Components: main restricted universe multiverse

Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

# Types: deb-src

# URIs: http://security.ubuntu.com/ubuntu/

# Suites: noble-security

# Components: main restricted universe multiverse

# Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

# Pre-release (proposed) repository; enabling it is not recommended

# Types: deb

# URIs: https://mirrors.tuna.tsinghua.edu.cn/ubuntu

# Suites: noble-proposed

# Components: main restricted universe multiverse

# Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

# # Types: deb-src

# # URIs: https://mirrors.tuna.tsinghua.edu.cn/ubuntu

# # Suites: noble-proposed

# # Components: main restricted universe multiverse

# # Signed-By: /usr/share/keyrings/ubuntu-archive-keyring.gpg

EOF

# Update the system

sudo apt clean

sudo apt update && sudo apt upgrade -y

Disable the firewall and swap on all nodes

systemctl disable --now ufw

# Turn off swap and comment out the swap entries in /etc/fstab

swapoff -a && sysctl -w vm.swappiness=0

sed -ri 's/.*swap.*/#&/' /etc/fstab

Set resource limits on all nodes

# Set limits for the current session

ulimit -SHn 65535

# Set limits permanently (a soft limit must not exceed the matching hard limit)

sed -i '/^# End/i\* soft nofile 65536' /etc/security/limits.conf

sed -i '/^# End/i\* hard nofile 131072' /etc/security/limits.conf

sed -i '/^# End/i\* soft nproc 655350' /etc/security/limits.conf

sed -i '/^# End/i\* hard nproc 655350' /etc/security/limits.conf

sed -i '/^# End/i\* soft memlock unlimited' /etc/security/limits.conf

sed -i '/^# End/i\* hard memlock unlimited' /etc/security/limits.conf

Configure the kernel parameters required by Kubernetes on all nodes.

cat > /etc/sysctl.d/k8s.conf <<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

net.ipv4.conf.all.route_localnet = 1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384


net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

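# net.bridge.* and net.netfilter.* keys only exist once br_netfilter and nf_conntrack are loaded

modprobe -a br_netfilter nf_conntrack

# Apply the settings

sysctl --system

Configure time synchronization on all nodes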

# Set the NTP server to `10.0.88.118` (use your own NTP server here)

sudo sed -i "s@^#NTP=.*@NTP=10.0.88.118@" /etc/systemd/timesyncd.conf

# Set the time zone and restart the service

sudo timedatectl set-timezone Asia/Shanghai

sudo timedatectl set-ntp off

sudo timedatectl set-ntp on

sudo systemctl daemon-reload

sudo systemctl restart systemd-timesyncd

Enable root login over SSH

sudo sed -i "s@^#PermitRootLogin.*@PermitRootLogin yes@" /etc/ssh/sshd_config

sudo systemctl restart ssh

echo 'root:xxxxxxx' | sudo chpasswd

On k8smaster01, set up passwordless SSH login to the other nodes

# Generate the SSH key pair used for passwordless login

ssh-keygen -t rsa

# Copy the public key to all nodes

for i in k8smaster01 k8smaster02 k8smaster03 k8snode01 k8snode02 k8snode03 k8snode04 k8snode05;do

ssh-copy-id -i ~/.ssh/id_rsa.pub root@$i;

done

Trim the login welcome message (MOTD)

cd /etc/update-motd.d/

chmod -x *

# Keep only the header script (00-header)

chmod +x 00-header

Custom shell profile

cat > /etc/profile.d/custom_profile.sh << EOF

HISTSIZE=10000

HISTTIMEFORMAT="%F %T \$(whoami) "

alias l='ls -AFhlt --color=auto'

alias lh='l | head'

alias ll='ls -l --color=auto'

alias ls='ls --color=auto'

alias vi=vim

GREP_OPTIONS="--color=auto"

alias grep='grep --color'

alias egrep='egrep --color'

alias fgrep='fgrep --color'

EOF

Customize PS1

[ -z "$(grep ^PS1 ~/.bashrc)" ] && echo "PS1='\${debian_chroot:+(\$debian_chroot)}\\[\\e[1;32m\\]\\u@\\h\\[\\033[00m\\]:\\[\\033[01;34m\\]\\w\\[\\033[00m\\]\\$ '" >> ~/.bashrc

Record command history with timestamps

[ -z "$(grep history-timestamp ~/.bashrc)" ] && echo "PROMPT_COMMAND='{ msg=\$(history 1 | { read x y; echo \$y; });user=\$(whoami); echo \$(date \"+%Y-%m-%d %H:%M:%S\"):\$user:\`pwd\`/:\$msg ---- \$(who am i); } >> /tmp/\`hostname\`.\`whoami\`.history-timestamp'" >> ~/.bashrc

Upgrade system packages on all nodes

apt update -y && apt upgrade -y

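Configure IPVS

Install ipvsadm and related tools on all nodes. kube-proxy supports an ipvs proxy mode, which scales better than iptables when there are many Services. It is not the default, though: kube-proxy uses iptables mode unless ipvs mode is configured explicitly, and ipvs mode also needs the IPVS kernel modules configured below.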

apt install -y ipvsadm ipset sysstat conntrack libseccomp-dev
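Installing ipvsadm by itself does not switch kube-proxy to IPVS. The mode has to be selected in kube-proxy's own configuration, which is created later when the worker components are deployed. The sketch below assumes kube-proxy will be started with `--config` pointing at a KubeProxyConfiguration file and shows the relevant fields. The `clusterCIDR` value comes from the Pod network above, `rr` is only one possible scheduler, and the function name is hypothetical.

```bash
# Hypothetical helper: print the KubeProxyConfiguration fields that select IPVS mode.
# Redirect the output into the file passed to kube-proxy --config.
kube_proxy_ipvs_config() {
  cat <<'EOF'
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: "ipvs"
clusterCIDR: 192.168.0.0/16
ipvs:
  scheduler: "rr"
EOF
}

kube_proxy_ipvs_config
```

Once kube-proxy is running in this mode, `ipvsadm -Ln` should list a virtual server for each Service ClusterIP, for example 10.96.0.1:443.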

Load the IPVS kernel modules on all nodes. Since kernel 4.19, nf_conntrack_ipv4 has been replaced by nf_conntrack.

cat > /etc/modules-load.d/ipvs.conf <<EOF

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

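# systemd-modules-load is a static oneshot unit that already ran at boot, so restart it to load the new list

systemctl restart systemd-modules-load.service

# Check that the modules are loaded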

lsmod | grep -e ip_vs -e nf_conntrack
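`lsmod | grep` shows which modules are loaded, but it does not show which ones are missing. The sketch below compares the list in a modules-load.d file against /proc/modules and prints every name that is not loaded. The function name `missing_modules` is hypothetical. Modules compiled into the kernel (listed in /lib/modules/$(uname -r)/modules.builtin) never appear in /proc/modules, so they will also be reported.

```bash
# Hypothetical helper: print modules listed in a modules-load.d file that are not loaded.
#   $1: modules-load.d file (default /etc/modules-load.d/ipvs.conf)
#   $2: loaded-module list in /proc/modules format (default /proc/modules)
missing_modules() {
  local conf=${1:-/etc/modules-load.d/ipvs.conf}
  local loaded=${2:-/proc/modules}
  local mod
  while read -r mod || [[ -n $mod ]]; do
    # Skip blank lines and comment lines ('#' or ';').
    [[ -z $mod || $mod == \#* || $mod == \;* ]] && continue
    # The first field of each /proc/modules line is the module name.
    if ! awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$loaded"; then
      echo "$mod"
    fi
  done < "$conf"
}
```

Running `missing_modules` with no arguments on a node should print nothing once all IPVS modules are loaded.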

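Install base components

This section installs the components used by the cluster, such as Docker CE (with containerd) and the Kubernetes components.

Install containerd as the container runtime

Install Docker CE and containerd on all nodes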

# Remove old Docker packages

for pkg in docker.io docker-doc docker-compose docker-compose-v2 podman-docker containerd runc; do sudo apt-get remove $pkg; done

# Set up the Docker apt repository

sudo apt-get update

sudo apt-get install ca-certificates curl

sudo install -m 0755 -d /etc/apt/keyrings

sudo wget https://download.docker.com/linux/ubuntu/gpg && sudo mv gpg /etc/apt/keyrings/docker.asc

sudo chmod a+r /etc/apt/keyrings/docker.asc

# Add the repository to Apt sources:

echo \

"deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu \

$(. /etc/os-release && echo "$VERSION_CODENAME") stable" | \

sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

sudo apt-get update

# Install Docker

sudo apt-get install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

Load the kernel modules required by containerd on all nodes:

cat << EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

modprobe -- overlay

modprobe -- br_netfilter

Configure containerd on all nodes

# Create the configuration directory

mkdir -p /etc/containerd

# Generate the default configuration file

containerd config default | tee /etc/containerd/config.toml

# Switch to the systemd cgroup driver

sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml

cat /etc/containerd/config.toml | grep SystemdCgroup

# Pull the pause (sandbox) image from a mirror registry

sed -i "s#registry.k8s.io#registry.cn-hangzhou.aliyuncs.com/google_containers#g" /etc/containerd/config.toml

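cat /etc/containerd/config.toml | grep sandbox_image

Start containerd on all nodes and enable it at boot. The package install already started containerd, so restart it to load the new config.toml.

systemctl daemon-reload

systemctl enable containerd

systemctl restart containerd

Install and configure the crictl client on all nodes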

# Download crictl

wget https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.27.0/crictl-v1.27.0-linux-amd64.tar.gz

# Install crictl

tar xf crictl-v*-linux-amd64.tar.gz -C /usr/bin/

# Generate the crictl configuration file

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

# Test crictl

crictl info

Install Nginx

Note: Nginx must be installed and configured as a proxy on every node.

Install the build toolchain

apt install -y gcc make

Build Nginx

# Download and unpack the Nginx source

wget http://nginx.org/download/nginx-1.25.1.tar.gz

tar xvf nginx-*.tar.gz

cd nginx-*

# Configure the build

./configure \

--prefix=/usr/local/nginx \

--user=www \

--group=www \

--with-stream \

--without-http \

--without-http_uwsgi_module \

--without-http_scgi_module \

--without-http_fastcgi_module

make && make install

Create the Nginx configuration file

mkdir -p /usr/local/nginx/conf/vhost

# Rewrite nginx.conf with a stream block only (the build used `--without-http`, so the http block must be removed, otherwise `nginx -t` reports an error)

cat > /usr/local/nginx/conf/nginx.conf <<EOF

user www www;

worker_processes 1;

events {

worker_connections 2048;

}

stream {

log_format basic '\$remote_addr [\$time_local] '

'\$protocol \$status \$bytes_sent \$bytes_received '

'\$session_time';

access_log /usr/local/nginx/logs/stream-access.log basic buffer=32k;

include /usr/local/nginx/conf/vhost/*.stream;

upstream backend {

hash \$remote_addr consistent;

server 172.31.100.11:6443 max_fails=3 fail_timeout=30s;

server 172.31.100.12:6443 max_fails=3 fail_timeout=30s;

server 172.31.100.13:6443 max_fails=3 fail_timeout=30s;

}

server {

listen 127.0.0.1:8443;

proxy_connect_timeout 1s;

proxy_pass backend;

}

}

EOF

Create the Nginx systemd unit

cat > /etc/systemd/system/nginx.service <<EOF

[Unit]

Description=kube-apiserver nginx proxy

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=forking

User=www

Group=www

ExecStartPre=/usr/local/nginx/sbin/nginx -c /usr/local/nginx/conf/nginx.conf -p /usr/local/nginx -t

ExecStart=/usr/local/nginx/sbin/nginx -c /usr/local/nginx/conf/nginx.conf -p /usr/local/nginx

ExecReload=/usr/local/nginx/sbin/nginx -c /usr/local/nginx/conf/nginx.conf -p /usr/local/nginx -s reload

PrivateTmp=true

Restart=always

RestartSec=5

StartLimitInterval=0

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

Create the Nginx service user and fix directory ownership

useradd www -s /sbin/nologin -M

chown -R www:www /usr/local/nginx

chown -R root:root /usr/local/nginx/sbin/nginx

chmod +s /usr/local/nginx/sbin/nginx

Start Nginx and enable it at boot

systemctl enable --now nginx

systemctl restart nginx

systemctl status nginx
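The `upstream backend` block above hard-codes the three master addresses, and every node keeps its own copy of nginx.conf. If masters are added or removed later, the block can be regenerated from a list of IPs instead of being edited by hand on each node. The sketch below does this. `render_upstream` is a hypothetical helper, and its server parameters simply mirror the configuration above.

```bash
# Hypothetical helper: print an nginx stream "upstream backend" block
# for the given kube-apiserver IPs (port 6443), mirroring the settings above.
render_upstream() {
  local ip
  echo "upstream backend {"
  echo "    hash \$remote_addr consistent;"
  for ip in "$@"; do
    echo "    server ${ip}:6443 max_fails=3 fail_timeout=30s;"
  done
  echo "}"
}

render_upstream 172.31.100.11 172.31.100.12 172.31.100.13
```

After updating nginx.conf, run `/usr/local/nginx/sbin/nginx -t -c /usr/local/nginx/conf/nginx.conf` and then `systemctl reload nginx` (which uses the unit's ExecReload line) to apply the change without stopping the proxy.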

Deploy the etcd cluster

Note: unless stated otherwise, the following steps are performed on k8smaster01.

Download the etcd binary package

wget https://github.com/etcd-io/etcd/releases/download/v3.5.14/etcd-v3.5.14-linux-amd64.tar.gz

Extract the etcd binary package

tar zxvf etcd-v3.5.14-linux-amd64.tar.gz

Install the etcd binaries

mkdir -p /usr/local/etcd/{bin,cfg,ssl}

cp etcd-v3.5.14-linux-amd64/{etcd,etcdctl} /usr/local/etcd/bin/

Download and install the cfssl tools

# Download the tools

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssljson_1.6.4_linux_amd64 -O /usr/local/bin/cfssljson

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl_1.6.4_linux_amd64 -O /usr/local/bin/cfssl

# Make the tools executable

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

Create the etcd certificates

mkdir -p ~/pki && cd ~/pki

# Create the CA configuration file

cat > ca-config.json<<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

# Create the CSR files

cat > etcd-ca-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

]

}

EOF

# Generate the etcd CA certificate and key

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /usr/local/etcd/ssl/etcd-ca

# Generate the etcd certificate

cfssl gencert \

-ca=/usr/local/etcd/ssl/etcd-ca.pem \

-ca-key=/usr/local/etcd/ssl/etcd-ca-key.pem \

-config=ca-config.json \

-hostname=127.0.0.1,\

172.31.100.11,\

172.31.100.12,\

172.31.100.13 \

-profile=kubernetes \

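etcd-csr.json | cfssljson -bare /usr/local/etcd/ssl/etcd

Note: -hostname must list the addresses of all etcd nodes. You can also add a few spare addresses so the etcd cluster can be scaled out later without reissuing the certificate.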
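After generating the certificate, it is worth checking that every etcd address actually ended up in the Subject Alternative Name list. It is also worth checking the expiry that the 876000h profile produced: 876000 hours is 36500 days, about 100 years. The sketch below uses OpenSSL; its `-ext` option needs OpenSSL 1.1.1 or later, which Ubuntu 24.04 ships. `show_cert` and `cert_has_ip` are hypothetical helper names.

```bash
# Hypothetical helpers for inspecting a certificate generated by cfssl.

# Print the SAN list and the expiry date of a PEM certificate.
show_cert() {
  openssl x509 -in "$1" -noout -ext subjectAltName
  openssl x509 -in "$1" -noout -enddate
}

# Exit status 0 if the certificate contains exactly this IP address as a SAN entry.
cert_has_ip() {
  openssl x509 -in "$1" -noout -ext subjectAltName \
    | tr ',' '\n' | sed 's/^[[:space:]]*//' | grep -Fqx "IP Address:$2"
}
```

For example: `for ip in 127.0.0.1 172.31.100.11 172.31.100.12 172.31.100.13; do cert_has_ip /usr/local/etcd/ssl/etcd.pem "$ip" || echo "missing $ip"; done`.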

Create the etcd configuration file

cat > /usr/local/etcd/cfg/etcd.config.yml << EOF

name: 'k8smaster01'

data-dir: /data/etcd

wal-dir: /data/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://172.31.100.11:2380'

listen-client-urls: 'https://172.31.100.11:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://172.31.100.11:2380'

advertise-client-urls: 'https://172.31.100.11:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8smaster01=https://172.31.100.11:2380,k8smaster02=https://172.31.100.12:2380,k8smaster03=https://172.31.100.13:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

cert-file: '/usr/local/etcd/ssl/etcd.pem'

key-file: '/usr/local/etcd/ssl/etcd-key.pem'

client-cert-auth: true

trusted-ca-file: '/usr/local/etcd/ssl/etcd-ca.pem'

auto-tls: true

peer-transport-security:

cert-file: '/usr/local/etcd/ssl/etcd.pem'

key-file: '/usr/local/etcd/ssl/etcd-key.pem'

peer-client-cert-auth: true

trusted-ca-file: '/usr/local/etcd/ssl/etcd-ca.pem'

auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

EOF

Create the etcd systemd unit (identical on all nodes)

cat > /usr/lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/local/etcd/bin/etcd --config-file=/usr/local/etcd/cfg/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

Copy the etcd files to the other etcd nodes

for i in k8smaster02 k8smaster03;do

sudo scp -r /usr/local/etcd root@$i:/usr/local/etcd;

sudo scp /usr/lib/systemd/system/etcd.service root@$i:/usr/lib/systemd/system/etcd.service;

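done

On the other nodes, edit the configuration file and change the following fields:

- name: the node name, which must be unique within the cluster

- listen-peer-urls: change to the current node's IP address

- listen-client-urls: change to the current node's IP address

- initial-advertise-peer-urls: change to the current node's IP address

- advertise-client-urls: change to the current node's IP address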
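The three configuration files differ only in these fields, so the edit can be scripted. The sketch below assumes the file was copied unchanged from k8smaster01, which means the address to replace is 172.31.100.11. `render_etcd_config` is a hypothetical helper. `initial-cluster` must stay identical on all members, so the substitutions are limited to the keys listed above.

```bash
# Hypothetical helper: print a copy of k8smaster01's etcd config with the node name
# and the per-node URLs rewritten for another member. initial-cluster is left untouched.
#   $1: source config   $2: new node name   $3: new node IP
render_etcd_config() {
  local src=$1 name=$2 ip=$3
  sed -e "s/^name: .*/name: '${name}'/" \
      -e "/^listen-peer-urls:/s/172\.31\.100\.11/${ip}/" \
      -e "/^listen-client-urls:/s/172\.31\.100\.11/${ip}/" \
      -e "/^initial-advertise-peer-urls:/s/172\.31\.100\.11/${ip}/" \
      -e "/^advertise-client-urls:/s/172\.31\.100\.11/${ip}/" \
      "$src"
}
```

On k8smaster02, for example: `render_etcd_config /usr/local/etcd/cfg/etcd.config.yml k8smaster02 172.31.100.12 > /tmp/etcd.config.yml && mv /tmp/etcd.config.yml /usr/local/etcd/cfg/etcd.config.yml`.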

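Start etcd on all nodes and enable it at boot. Start the members at about the same time: with initial-cluster-state 'new', a member waits until enough peers have joined to form a quorum, so starting a single node on its own may appear to hang.

systemctl enable --now etcd

Check the etcd cluster status

export ETCDCTL_API=3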

/usr/local/etcd/bin/etcdctl \

--cacert=/usr/local/etcd/ssl/etcd-ca.pem \

--cert=/usr/local/etcd/ssl/etcd.pem \

--key=/usr/local/etcd/ssl/etcd-key.pem \

--endpoints="https://172.31.100.11:2379,\

https://172.31.100.12:2379,\

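https://172.31.100.13:2379" endpoint status --write-out=table

Note: if a member fails to start during the initial bootstrap, clear its data and start it again. For details, see the article "Steps to recover a failed etcd cluster node".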

- Specifically, on the failed node (the path is the data-dir set in etcd.config.yml):

rm -rf /data/etcd/*

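Deploy the Kubernetes cluster

Deploy the master components

Note: unless stated otherwise, the following steps are performed on k8smaster01.

Download the Kubernetes server binaries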

wget https://dl.k8s.io/v1.27.8/kubernetes-server-linux-amd64.tar.gz

Extract the Kubernetes package

tar zxvf kubernetes-server-linux-amd64.tar.gz

Install the Kubernetes binaries

mkdir -p /usr/local/kubernetes/{bin,cfg,ssl,manifests}

cp kubernetes/server/bin/kube{-apiserver,-controller-manager,-scheduler,-proxy,let} /usr/local/kubernetes/bin/

cp kubernetes/server/bin/kubectl /usr/local/bin/

Create the master component certificates

Note: unless stated otherwise, the following steps are performed on k8smaster01.

Create the CA certificate

cd ~/pki

# Create the CA configuration file

cat > ca-config.json<<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

# Create ca-csr.json

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

# Create the CA certificate and key

cfssl gencert -initca ca-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/ca

Create the API server certificate

# Address and port of the API server load balancer, used by the kubeconfig files created below (here the local Nginx proxy on each node)

export CLB_ADDRESS="127.0.0.1"

export CLB_PORT="8443"

# Create apiserver-csr.json

cat > apiserver-csr.json <<EOF

{

"CN": "kube-apiserver",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

]

}

EOF

# Create the apiserver certificate

cfssl gencert \

-ca=/usr/local/kubernetes/ssl/ca.pem \

-ca-key=/usr/local/kubernetes/ssl/ca-key.pem \

-config=ca-config.json \

-hostname=10.96.0.1,\

127.0.0.1,\

kubernetes,\

kubernetes.default,\

kubernetes.default.svc,\

kubernetes.default.svc.cluster,\

kubernetes.default.svc.cluster.local,\

172.31.100.11,\

172.31.100.12,\

172.31.100.13 \

-profile=kubernetes \

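apiserver-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/apiserver

Generate the API aggregation layer (front-proxy) certificates. The apiserver uses them through its --requestheader-client-ca-file and --proxy-client-cert-file/--proxy-client-key-file flags. The CN of the client certificate (front-proxy-client) must appear in --requestheader-allowed-names.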

# Create front-proxy-ca-csr.json

cat > front-proxy-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

# Create front-proxy-client-csr.json

cat > front-proxy-client-csr.json <<EOF

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

# Create the aggregation layer CA

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/front-proxy-ca

# Create the aggregation layer client certificate

cfssl gencert \

-ca=/usr/local/kubernetes/ssl/front-proxy-ca.pem \

-ca-key=/usr/local/kubernetes/ssl/front-proxy-ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

front-proxy-client-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/front-proxy-client

Generate the controller-manager certificate

# Create manager-csr.json

cat > manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes-manual"

}

]

}

EOF

# Create the controller-manager certificate

cfssl gencert \

-ca=/usr/local/kubernetes/ssl/ca.pem \

-ca-key=/usr/local/kubernetes/ssl/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

manager-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/controller-manager

# Create the kubeconfig file for controller-manager

kubectl config set-cluster kubernetes \

--certificate-authority=/usr/local/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://${CLB_ADDRESS}:${CLB_PORT} \

--kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig && \

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/usr/local/kubernetes/ssl/controller-manager.pem \

--client-key=/usr/local/kubernetes/ssl/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig && \

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig && \

kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig

Generate the scheduler certificate

# Create scheduler-csr.json

cat > scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes-manual"

}

]

}

EOF

# Create the scheduler certificate

cfssl gencert \

-ca=/usr/local/kubernetes/ssl/ca.pem \

-ca-key=/usr/local/kubernetes/ssl/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/scheduler

# Create the kubeconfig file for scheduler

kubectl config set-cluster kubernetes \

--certificate-authority=/usr/local/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://${CLB_ADDRESS}:${CLB_PORT} \

--kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig && \

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/usr/local/kubernetes/ssl/scheduler.pem \

--client-key=/usr/local/kubernetes/ssl/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig && \

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig && \

kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig

Generate the certificate for the cluster administrator (admin)

# Create admin-csr.json

cat > admin-csr.json <<EOF

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes-manual"

}

]

}

EOF

# Create the admin certificate

cfssl gencert \

-ca=/usr/local/kubernetes/ssl/ca.pem \

-ca-key=/usr/local/kubernetes/ssl/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/admin

# Create the kubeconfig file for the admin user

kubectl config set-cluster kubernetes \

--certificate-authority=/usr/local/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://${CLB_ADDRESS}:${CLB_PORT} \

--kubeconfig=/usr/local/kubernetes/cfg/admin.kubeconfig && \

kubectl config set-credentials kubernetes-admin \

--client-certificate=/usr/local/kubernetes/ssl/admin.pem \

--client-key=/usr/local/kubernetes/ssl/admin-key.pem \

--embed-certs=true \

--kubeconfig=/usr/local/kubernetes/cfg/admin.kubeconfig && \

kubectl config set-context kubernetes-admin@kubernetes \

--cluster=kubernetes \

--user=kubernetes-admin \

--kubeconfig=/usr/local/kubernetes/cfg/admin.kubeconfig && \

kubectl config use-context kubernetes-admin@kubernetes \

--kubeconfig=/usr/local/kubernetes/cfg/admin.kubeconfig

Generate the ServiceAccount key pair

openssl genrsa -out /usr/local/kubernetes/ssl/sa.key 2048

openssl rsa -in /usr/local/kubernetes/ssl/sa.key -pubout -out /usr/local/kubernetes/ssl/sa.pub
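kube-apiserver signs ServiceAccount tokens with sa.key (--service-account-signing-key-file) and verifies them with sa.pub (--service-account-key-file). kube-controller-manager also uses sa.key. The two files must therefore be a matching pair on every master. The sketch below is a quick way to confirm that. `sa_pair_matches` is a hypothetical helper; it re-derives the public key and compares it with the file on disk.

```bash
# Hypothetical helper: exit status 0 if the public key file matches the private key.
sa_pair_matches() {
  local key=$1 pub=$2
  # Re-derive the public key from the private key and compare byte for byte.
  diff -q <(openssl rsa -in "$key" -pubout 2>/dev/null) "$pub" >/dev/null
}
```

Usage: `sa_pair_matches /usr/local/kubernetes/ssl/sa.key /usr/local/kubernetes/ssl/sa.pub && echo match`.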

Configure the master components

Note: unless stated otherwise, the following steps are performed on k8smaster01.

Create the kube-apiserver systemd unit

cat > /usr/lib/systemd/system/kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/kubernetes/bin/kube-apiserver \\

--v=2 \\

--allow-privileged=true \\

--bind-address=0.0.0.0 \\

--secure-port=6443 \\

--advertise-address=172.31.100.11 \\

--service-cluster-ip-range=10.96.0.0/16 \\

--service-node-port-range=30000-32767 \\

--etcd-servers=https://172.31.100.11:2379,https://172.31.100.12:2379,https://172.31.100.13:2379 \\

--etcd-cafile=/usr/local/etcd/ssl/etcd-ca.pem \\

--etcd-certfile=/usr/local/etcd/ssl/etcd.pem \\

--etcd-keyfile=/usr/local/etcd/ssl/etcd-key.pem \\

--client-ca-file=/usr/local/kubernetes/ssl/ca.pem \\

--tls-cert-file=/usr/local/kubernetes/ssl/apiserver.pem \\

--tls-private-key-file=/usr/local/kubernetes/ssl/apiserver-key.pem \\

--kubelet-client-certificate=/usr/local/kubernetes/ssl/apiserver.pem \\

--kubelet-client-key=/usr/local/kubernetes/ssl/apiserver-key.pem \\

--service-account-key-file=/usr/local/kubernetes/ssl/sa.pub \\

--service-account-signing-key-file=/usr/local/kubernetes/ssl/sa.key \\

--service-account-issuer=https://kubernetes.default.svc.cluster.local \\

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \\

--authorization-mode=Node,RBAC \\

--enable-bootstrap-token-auth=true \\

--requestheader-client-ca-file=/usr/local/kubernetes/ssl/front-proxy-ca.pem \\

--proxy-client-cert-file=/usr/local/kubernetes/ssl/front-proxy-client.pem \\

--proxy-client-key-file=/usr/local/kubernetes/ssl/front-proxy-client-key.pem \\

--requestheader-allowed-names=front-proxy-client \\

--requestheader-group-headers=X-Remote-Group \\

--requestheader-extra-headers-prefix=X-Remote-Extra- \\

--requestheader-username-headers=X-Remote-User \\

--enable-aggregator-routing=true

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

EOF

Create the kube-controller-manager systemd unit

cat >/usr/lib/systemd/system/kube-controller-manager.service <<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/kubernetes/bin/kube-controller-manager \\

--v=2 \\

--bind-address=0.0.0.0 \\

--root-ca-file=/usr/local/kubernetes/ssl/ca.pem \\

--cluster-signing-cert-file=/usr/local/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/usr/local/kubernetes/ssl/ca-key.pem \\

--service-account-private-key-file=/usr/local/kubernetes/ssl/sa.key \\

--authentication-kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig \\

--authorization-kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig \\

--kubeconfig=/usr/local/kubernetes/cfg/controller-manager.kubeconfig \\

--leader-elect=true \\

--use-service-account-credentials=true \\

--node-monitor-grace-period=40s \\

--node-monitor-period=5s \\

--controllers=*,bootstrapsigner,tokencleaner \\

--allocate-node-cidrs=true \\

--cluster-cidr=192.168.0.0/16 \\

--requestheader-client-ca-file=/usr/local/kubernetes/ssl/front-proxy-ca.pem \\

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

Create the kube-scheduler systemd unit

cat >/usr/lib/systemd/system/kube-scheduler.service <<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/kubernetes/bin/kube-scheduler \\

--v=2 \\

--bind-address=0.0.0.0 \\

--leader-elect=true \\

--authentication-kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig \\

--authorization-kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig \\

--kubeconfig=/usr/local/kubernetes/cfg/scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

Copy the files to the other master nodes

for i in k8smaster02 k8smaster03;do

scp /usr/lib/systemd/system/kube-{apiserver,controller-manager,scheduler}.service root@$i:/usr/lib/systemd/system/;

scp -r /usr/local/kubernetes/ root@$i:/usr/local/kubernetes;

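done

On k8smaster02 and k8smaster03, change --advertise-address=172.31.100.11 in kube-apiserver.service to the node's own IP address.

Start the following services on all master nodes:

systemctl daemon-reload

systemctl enable --now kube-apiserver kube-controller-manager kube-scheduler

Configure the kubectl client. The following steps are performed only on the node where kubectl is installed. kubectl does not have to run inside the cluster; it can be installed on any server that can reach the cluster. Note that admin.kubeconfig points at https://127.0.0.1:8443, the local Nginx proxy, so on a machine without that proxy the server address must be changed to a reachable apiserver.

# Create the kubectl configuration file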

mkdir ~/.kube

cp /usr/local/kubernetes/cfg/admin.kubeconfig ~/.kube/config

# Enable kubectl command completion

apt install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

kubectl completion bash > ~/.kube/completion.bash.inc

echo "source ~/.kube/completion.bash.inc" >> ~/.bashrc

source ~/.bashrc

Test the kubectl command

# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

# kubectl cluster-info

Kubernetes control plane is running at https://127.0.0.1:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

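kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 11h

Note: 10.96.0.1 is the first usable IP address in the 10.96.0.0/16 Service network. It is assigned to the kubernetes Service, which is why it is included in the apiserver certificate's -hostname list.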
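If a different Service range is chosen, the address that goes into the apiserver certificate changes with it. The sketch below computes that address from the CIDR. It is a hypothetical helper and handles IPv4 only.

```bash
# Hypothetical helper: print the first usable address of an IPv4 CIDR,
# i.e. the ClusterIP that the "kubernetes" Service gets from --service-cluster-ip-range.
first_service_ip() {
  local ip=${1%/*} prefix=${1#*/}
  local IFS=.
  local a b c d n
  read -r a b c d <<< "$ip"
  # Mask down to the network address, then add one.
  n=$(( ((a << 24) | (b << 16) | (c << 8) | d) & ((0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF) ))
  n=$(( n + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

For the range used in this article, `first_service_ip 10.96.0.0/16` prints 10.96.0.1.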

Deploy the worker components

Configure TLS bootstrapping

Note: unless stated otherwise, the following steps are performed on k8smaster01.

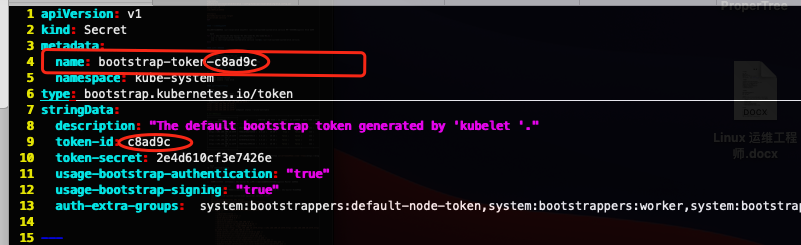
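The token-id and token-secret in the Secret below are sample values. If they are reused, anyone who has read this article knows the bootstrap token. Kubernetes requires the token ID to match [a-z0-9]{6} and the secret to match [a-z0-9]{16}, and the Secret must be named bootstrap-token-<token-id>. The sketch below generates fresh values; the helper name is hypothetical.

```bash
# Hypothetical helper: N random characters from [a-z0-9], read from /dev/urandom.
rand_lower_alnum() {
  LC_ALL=C tr -dc 'a-z0-9' < /dev/urandom | head -c "$1"
}

TOKEN_ID=$(rand_lower_alnum 6)
TOKEN_SECRET=$(rand_lower_alnum 16)

echo "Secret name:  bootstrap-token-${TOKEN_ID}"
echo "token-id:     ${TOKEN_ID}"
echo "token-secret: ${TOKEN_SECRET}"
# The kubelet bootstrap kubeconfig uses the combined form <token-id>.<token-secret>.
echo "token:        ${TOKEN_ID}.${TOKEN_SECRET}"
```

Substitute the printed values into the Secret's name, token-id and token-secret fields, and use the combined token wherever the kubelet bootstrap kubeconfig is created.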

Create bootstrap.secret.yaml

cat > bootstrap.secret.yaml <<EOF

apiVersion: v1

kind: Secret

metadata:

name: bootstrap-token-c8ad9c

namespace: kube-system

type: bootstrap.kubernetes.io/token

stringData:

description: "The default bootstrap token generated by 'kubelet '."

token-id: c8ad9c

token-secret: 2e4d610cf3e7426e

usage-bootstrap-authentication: "true"

usage-bootstrap-signing: "true"

auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kubelet-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:node-bootstrapper

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:nodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-certificate-rotation

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:nodes

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

annotations:

rbac.authorization.kubernetes.io/autoupdate: "true"

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: system:kube-apiserver-to-kubelet

rules:

- apiGroups:

- ""

resources:

- nodes/proxy

- nodes/stats

- nodes/log

- nodes/spec

- nodes/metrics

verbs:

- "*"

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: system:kube-apiserver

namespace: ""

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:kube-apiserver-to-kubelet

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: User

name: kube-apiserver

EOF创建 bootstrap RBAC 策略

1

kubectl create -f bootstrap.secret.yaml

Deploy the kubelet component

Note: unless stated otherwise, the following steps are performed on the k8smaster01 node.

Create the bootstrap-kubelet.kubeconfig file

kubectl config set-cluster kubernetes \
  --certificate-authority=/usr/local/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=https://${CLB_ADDRESS}:${CLB_PORT} \
  --kubeconfig=/usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig && \
kubectl config set-credentials tls-bootstrap-token-user \
  --token=c8ad9c.2e4d610cf3e7426e \
  --kubeconfig=/usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig && \
kubectl config set-context tls-bootstrap-token-user@kubernetes \
  --cluster=kubernetes \
  --user=tls-bootstrap-token-user \
  --kubeconfig=/usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig && \
kubectl config use-context tls-bootstrap-token-user@kubernetes \
  --kubeconfig=/usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig

Note: if you change token-id and token-secret in bootstrap.secret.yaml, keep the strings highlighted in red in the figure consistent with each other and of the same length. The rules are:

- The Secret name must be bootstrap-token-<token-id>.
- token-id must be 6 characters from [a-z0-9].
- token-secret must be 16 characters from [a-z0-9].

The --token value in the command above (c8ad9c.2e4d610cf3e7426e, that is, <token-id>.<token-secret>) must also match your new values.
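You can generate a new token that satisfies these format rules, and check an existing one, with a few lines of Bash. The following is a minimal sketch. The function names are hypothetical, and randomness comes from `/dev/urandom`:

```shell
#!/usr/bin/env bash
# Hypothetical helpers for bootstrap tokens of the form [a-z0-9]{6}.[a-z0-9]{16}.

# Print N random characters from [a-z0-9].
rand_lower_alnum() {
  LC_ALL=C tr -dc 'a-z0-9' < /dev/urandom 2>/dev/null | head -c "$1"
}

# Print a new token as "<token-id>.<token-secret>".
new_bootstrap_token() {
  echo "$(rand_lower_alnum 6).$(rand_lower_alnum 16)"
}

# Return 0 if the argument has the bootstrap token format.
is_bootstrap_token() {
  [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

token=$(new_bootstrap_token)
echo "token-id:     ${token%%.*}"   # also the suffix of the Secret name bootstrap-token-<token-id>
echo "token-secret: ${token#*.}"
echo "--token=${token}"
```

Put the printed values into `token-id`, `token-secret`, and the Secret name in bootstrap.secret.yaml, and into the `--token` flag above.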

Create the application directories and copy the binaries. Here the directories are created by running commands on each Worker node over SSH:

for i in k8snode01 k8snode02 k8snode03 k8snode04 k8snode05;do
  ssh root@$i "mkdir -p /usr/local/kubernetes/{bin,cfg,ssl,manifests}";
  scp ~/kubernetes/server/bin/{kubelet,kube-proxy} root@$i:/usr/local/kubernetes/bin/;
done

Copy the certificates and configuration files to the other nodes (the remaining masters and all Worker nodes):

for i in k8smaster02 k8smaster03 k8snode01 k8snode02 k8snode03 k8snode04 k8snode05;do
  scp /usr/local/kubernetes/ssl/{ca.pem,ca-key.pem,front-proxy-ca.pem} root@$i:/usr/local/kubernetes/ssl/;
  scp /usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig root@$i:/usr/local/kubernetes/cfg/;
done

Run the following command on all Worker nodes to create the kubelet service unit file:

cat >/usr/lib/systemd/system/kubelet.service <<EOF
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
# The container runtime is containerd, matching --container-runtime-endpoint in 10-kubelet.conf
After=containerd.service
Requires=containerd.service

[Service]
ExecStart=/usr/local/kubernetes/bin/kubelet
Restart=always
StartLimitInterval=0
RestartSec=10

[Install]
WantedBy=multi-user.target
EOF

Run the following commands on all Worker nodes to create the kubelet service drop-in configuration file:

mkdir -p /etc/systemd/system/kubelet.service.d;
cat >/etc/systemd/system/kubelet.service.d/10-kubelet.conf <<EOF
[Service]
Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/usr/local/kubernetes/cfg/bootstrap-kubelet.kubeconfig --kubeconfig=/usr/local/kubernetes/cfg/kubelet.kubeconfig"
Environment="KUBELET_SYSTEM_ARGS=--container-runtime-endpoint=unix:///run/containerd/containerd.sock"
Environment="KUBELET_CONFIG_ARGS=--config=/usr/local/kubernetes/cfg/kubelet-conf.yml"
Environment="KUBELET_EXTRA_ARGS="
ExecStart=
ExecStart=/usr/local/kubernetes/bin/kubelet \$KUBELET_KUBECONFIG_ARGS \$KUBELET_CONFIG_ARGS \$KUBELET_SYSTEM_ARGS \$KUBELET_EXTRA_ARGS
EOF

Note: the drop-in above does not set --node-labels. The kubelet rejects node-role.kubernetes.io/ labels passed through --node-labels. To mark a master node, label it with kubectl after it has registered, for example: kubectl label node k8smaster01 node-role.kubernetes.io/master=''

Run the following command on all Worker nodes to create the kubelet configuration file:

cat >/usr/local/kubernetes/cfg/kubelet-conf.yml <<EOF
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /usr/local/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 10.96.0.10
clusterDomain: cluster.local
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
# Ubuntu 24.04 runs systemd-resolved, so /etc/resolv.conf points at the 127.0.0.53 stub.
# Passing that to Pods makes CoreDNS forward queries back to itself (a DNS loop).
# Keep /etc/resolv.conf here only if systemd-resolved is not in use.
resolvConf: /run/systemd/resolve/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /usr/local/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s
EOF

Run the following command on all Worker nodes to start kubelet:

systemctl daemon-reload && systemctl enable --now kubelet

Run the following command on all Worker nodes to check the kubelet service status:

systemctl status kubelet.service

Note: at this stage it is normal for the log to show only messages such as "Unable to update cni config: no networks found in /etc/cni/net.d". No CNI network plugin has been installed yet (Calico is installed later).

On k8smaster01, run the following command to check the cluster node status.

The sample output below comes from an earlier environment (v1.26.5, CentOS 7, 192.168.1.x). Names, addresses, and versions will differ in this deployment. In any case, every node shows NotReady until the network plugin is installed:

# kubectl get nodes -owide
NAME           STATUS     ROLES    AGE   VERSION   INTERNAL-IP    EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION                CONTAINER-RUNTIME
k8s-master01   NotReady   <none>   84s   v1.26.5   192.168.1.51   <none>        CentOS Linux 7 (Core)   4.19.12-1.el7.elrepo.x86_64   containerd://1.6.21
k8s-master02   NotReady   <none>   84s   v1.26.5   192.168.1.52   <none>        CentOS Linux 7 (Core)   4.19.12-1.el7.elrepo.x86_64   containerd://1.6.21
k8s-master03   NotReady   <none>   84s   v1.26.5   192.168.1.53   <none>        CentOS Linux 7 (Core)   4.19.12-1.el7.elrepo.x86_64   containerd://1.6.21
k8s-node01     NotReady   <none>   84s   v1.26.5   192.168.1.54   <none>        CentOS Linux 7 (Core)   4.19.12-1.el7.elrepo.x86_64   containerd://1.6.21
k8s-node02     NotReady   <none>   83s   v1.26.5   192.168.1.55   <none>        CentOS Linux 7 (Core)   4.19.12-1.el7.elrepo.x86_64   containerd://1.6.21

Deploy kube-proxy

Note: unless stated otherwise, the following steps are performed on the k8smaster01 node.

Create the certificate and kubeconfig file for the kube-proxy component

cd ~/pki
# Create the kube-proxy-csr.json file
cat > kube-proxy-csr.json <<EOF
{
  "CN": "system:kube-proxy",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "Beijing",
      "L": "Beijing",
      "O": "system:kube-proxy",
      "OU": "Kubernetes-manual"
    }
  ]
}
EOF

# Generate the kube-proxy certificate
cfssl gencert \
  -ca=/usr/local/kubernetes/ssl/ca.pem \
  -ca-key=/usr/local/kubernetes/ssl/ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes \
  kube-proxy-csr.json | cfssljson -bare /usr/local/kubernetes/ssl/kube-proxy

# Create the kubeconfig file for the kube-proxy component
kubectl config set-cluster kubernetes \
  --certificate-authority=/usr/local/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=https://${CLB_ADDRESS}:${CLB_PORT} \
  --kubeconfig=/usr/local/kubernetes/cfg/kube-proxy.kubeconfig && \
kubectl config set-credentials kube-proxy \
  --client-certificate=/usr/local/kubernetes/ssl/kube-proxy.pem \
  --client-key=/usr/local/kubernetes/ssl/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=/usr/local/kubernetes/cfg/kube-proxy.kubeconfig && \
kubectl config set-context kube-proxy@kubernetes \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=/usr/local/kubernetes/cfg/kube-proxy.kubeconfig && \
kubectl config use-context kube-proxy@kubernetes \
  --kubeconfig=/usr/local/kubernetes/cfg/kube-proxy.kubeconfig

Create the kube-proxy service unit file

cat >/usr/lib/systemd/system/kube-proxy.service <<EOF
[Unit]
Description=Kubernetes Kube Proxy
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/kubernetes/bin/kube-proxy \\
  --config=/usr/local/kubernetes/cfg/kube-proxy.conf \\
  --v=2
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

Create the kube-proxy configuration file. clusterCIDR must match the cluster's Pod CIDR (192.168.0.0/16 in this guide). If you use a different Pod CIDR, change clusterCIDR accordingly.

cat >/usr/local/kubernetes/cfg/kube-proxy.conf <<EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
clientConnection:
  acceptContentTypes: ""
  burst: 10
  contentType: application/vnd.kubernetes.protobuf
  kubeconfig: /usr/local/kubernetes/cfg/kube-proxy.kubeconfig
  qps: 5
clusterCIDR: 192.168.0.0/16
configSyncPeriod: 15m0s
conntrack:
  max: null
  maxPerCore: 32768
  min: 131072
  tcpCloseWaitTimeout: 1h0m0s
  tcpEstablishedTimeout: 24h0m0s
enableProfiling: false
healthzBindAddress: 0.0.0.0:10256
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: 14
  minSyncPeriod: 0s
  syncPeriod: 30s
ipvs:
  masqueradeAll: true
  minSyncPeriod: 5s
  scheduler: "rr"
  syncPeriod: 30s
kind: KubeProxyConfiguration
metricsBindAddress: 127.0.0.1:10249
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: -999
portRange: ""
udpIdleTimeout: 250ms
EOF

Copy the kube-proxy files to the other nodes

for i in k8smaster02 k8smaster03 k8snode01 k8snode02 k8snode03 k8snode04 k8snode05;do
  scp /usr/local/kubernetes/cfg/kube-proxy.{conf,kubeconfig} root@$i:/usr/local/kubernetes/cfg/;
  scp /usr/lib/systemd/system/kube-proxy.service root@$i:/usr/lib/systemd/system/kube-proxy.service;
done

Start kube-proxy on all nodes:

systemctl daemon-reload && systemctl enable --now kube-proxy.service
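kube-proxy.conf sets `mode: "ipvs"`, which needs the IPVS kernel modules on every node. The following is a minimal sketch that lists which of the commonly required modules are absent from `/proc/modules`. The module list (`ip_vs`, `ip_vs_rr`, `ip_vs_wrr`, `ip_vs_sh`, `nf_conntrack`) is an assumption, so adjust it to your kernel. Modules built into the kernel do not appear in `/proc/modules`, so treat a hit as a prompt to check rather than proof of a problem. `missing_ipvs_modules` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print IPVS-related modules that are not listed in a modules file.
# The module list is an assumption based on the usual kube-proxy IPVS requirements.
missing_ipvs_modules() {
  local modules_file="${1:-/proc/modules}" m
  for m in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack; do
    # /proc/modules has one module per line, with the name in the first column
    if ! awk -v mod="$m" '$1 == mod { found = 1 } END { exit !found }' "$modules_file"; then
      echo "$m"
    fi
  done
}

# Example on a node: load whatever is reported as missing
#   for m in $(missing_ipvs_modules); do modprobe "$m"; done
```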

Once kube-proxy is running, every node should be able to reach port 443 on 10.96.0.1 (the kubernetes Service).
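You can run this check from each node without extra tools, using Bash's `/dev/tcp` pseudo-device wrapped in coreutils `timeout`. The following is a minimal sketch. `check_tcp` is a hypothetical helper, and it only proves that a TCP connection opens, not that TLS or the API itself works:

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if a TCP connection to host:port opens within a timeout.
# Relies on Bash's /dev/tcp pseudo-device and coreutils `timeout`.
check_tcp() {
  local host="$1" port="$2" secs="${3:-3}"
  timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Example, run on each node after kube-proxy is up:
#   check_tcp 10.96.0.1 443 && echo "10.96.0.1:443 reachable" || echo "10.96.0.1:443 NOT reachable"
```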

Deploy k8s Add-ons

Install Calico

Download the Calico manifest (v3.26.4 in this guide):

curl https://raw.githubusercontent.com/projectcalico/calico/v3.26.4/manifests/calico-etcd.yaml -O

Edit calico-etcd.yaml to add the etcd endpoints and certificates:

sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://172.31.100.11:2379,https://172.31.100.12:2379,https://172.31.100.13:2379"#g' calico-etcd.yaml
ETCD_CA=`cat /usr/local/etcd/ssl/etcd-ca.pem | base64 | tr -d '\n'`
ETCD_CERT=`cat /usr/local/etcd/ssl/etcd.pem | base64 | tr -d '\n'`
ETCD_KEY=`cat /usr/local/etcd/ssl/etcd-key.pem | base64 | tr -d '\n'`
sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml
sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml

Change the Calico IP pool CIDR to the Pod CIDR, that is, 192.168.0.0/16. If you use a different Pod CIDR, change the value on the right-hand side of the second expression:

sed -i "s@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@#[[:space:]]*value: \"192.168.0.0/16\"@  value: \"192.168.0.0/16\"@g" calico-etcd.yaml
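The Pod CIDR now appears in several files: clusterCIDR in kube-proxy.conf and CALICO_IPV4POOL_CIDR in calico-etcd.yaml. A mismatch between them is easy to miss. The following is a rough consistency check. It assumes a plain text search is good enough and ignores lines that start with `#`, so the Calico entry only passes once it has been uncommented. `check_pod_cidr` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: check that each file mentions the Pod CIDR on a non-comment line.
# This is a plain text search, not a YAML parser.
check_pod_cidr() {
  local cidr="$1"; shift
  local f rc=0
  for f in "$@"; do
    if grep -v '^[[:space:]]*#' "$f" | grep -qF -- "$cidr"; then
      echo "OK       $f"
    else
      echo "MISSING  $f"
      rc=1
    fi
  done
  return "$rc"
}

# Example:
#   check_pod_cidr 192.168.0.0/16 /usr/local/kubernetes/cfg/kube-proxy.conf calico-etcd.yaml
```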

Add node affinity so that Calico is not scheduled onto edge nodes (optional; skip this step if this is not an edge cluster):

...
  template:
    metadata:
      labels:
        k8s-app: calico-node
    spec:
      nodeSelector:
        kubernetes.io/os: linux
      hostNetwork: true
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: node-role.kubernetes.io/edge
                operator: DoesNotExist
              - key: node-role.kubernetes.io/agent
                operator: DoesNotExist
      tolerations:
        # Make sure calico-node gets scheduled on all nodes.
        - effect: NoSchedule
          operator: Exists
        # Mark the pod as a critical add-on for rescheduling.
        - key: CriticalAddonsOnly
          operator: Exists
        - effect: NoExecute
          operator: Exists
...

Run the following command on k8smaster01 to install Calico:

kubectl create -f calico-etcd.yaml

Check the status of the Calico Pods. System Pods all run in the kube-system namespace:

kubectl get pods -n kube-system
# Output:
NAME                                      READY   STATUS    RESTARTS   AGE
calico-kube-controllers-97769f7c7-42snz   1/1     Running   0          2m47s
calico-node-b8hqx                         1/1     Running   0          2m47s
calico-node-m5x7n                         1/1     Running   0          2m47s
calico-node-pz5qn                         1/1     Running   0          2m47s
calico-node-vp6wm                         1/1     Running   0          2m47s
calico-node-wqgtn                         1/1     Running   0          2m47s

Check the Pods again, this time with their IPs and the nodes they run on. Once Calico is running, kubectl get nodes should also report the nodes as Ready:

# kubectl get pods -n kube-system -owide
NAME                                       READY   STATUS    RESTARTS   AGE     IP              NODE           NOMINATED NODE   READINESS GATES
calico-kube-controllers-84d6655649-xdkc8   1/1     Running   0          3m32s   172.31.100.14   k8s-node01     <none>           <none>
calico-node-4d7rw                          1/1     Running   0          3m32s   172.31.100.13   k8s-master03   <none>           <none>
calico-node-m86qp                          1/1     Running   0          3m32s   172.31.100.12   k8s-master02   <none>           <none>
calico-node-ncrrz                          1/1     Running   0          3m32s   172.31.100.15   k8s-node02     <none>           <none>
calico-node-ph6gd                          1/1     Running   0          3m32s   172.31.100.11   k8s-master01   <none>           <none>
calico-node-qzwhr                          1/1     Running   0          3m32s   172.31.100.14   k8s-node01     <none>           <none>

If a container is in an abnormal state, inspect it with kubectl describe or kubectl logs.
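With many Pods, you can filter the table for the problem rows before running `kubectl describe` or `kubectl logs`. The following is a minimal sketch. It assumes the default single-namespace `kubectl get pods` layout (no `-A`, so NAME, READY, and STATUS are the first three columns). `find_unhealthy_pods` is a hypothetical filter:

```shell
#!/usr/bin/env bash
# Hypothetical filter: read `kubectl get pods` table output (single namespace, no -A)
# on stdin and print Pods that are not Running with all containers ready.
find_unhealthy_pods() {
  awk 'NR > 1 {
    split($2, ready, "/")
    if ($3 == "Completed") next            # finished Job Pods are expected to be 0/N
    if ($3 != "Running" || ready[1] != ready[2]) print $1, $2, $3
  }'
}

# Example:
#   kubectl get pods -n kube-system | find_unhealthy_pods
```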

Install CoreDNS

Install the latest version of CoreDNS:

# Download the source
git clone https://github.com/coredns/deployment.git
# Deploy directly
cd deployment/kubernetes
./deploy.sh -s -i 10.96.0.10 | kubectl apply -f -
# Output:
serviceaccount/coredns created
clusterrole.rbac.authorization.k8s.io/system:coredns created
clusterrolebinding.rbac.authorization.k8s.io/system:coredns created
configmap/coredns created
deployment.apps/coredns created
service/kube-dns created
# Generate the coredns.yaml file
./deploy.sh -s -i 10.96.0.10 > coredns.yaml

Check the status

kubectl get pods -n kube-system -l k8s-app=kube-dns
NAME                       READY   STATUS    RESTARTS   AGE
coredns-7bf4bd64bd-fn8wc   1/1     Running   0          107s
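The IP passed to deploy.sh with `-i 10.96.0.10` must match the `clusterDNS` entry in kubelet-conf.yml. Otherwise Pods are handed a nameserver address that CoreDNS does not serve. The following is a minimal sketch that reads the first `clusterDNS` entry from a kubelet config written in the block-list layout used above. It is a text match rather than a YAML parser, and `kubelet_cluster_dns` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first clusterDNS entry of a kubelet config file
# written as "clusterDNS:" followed by "- <ip>" lines (inline [..] lists are not handled).
kubelet_cluster_dns() {
  awk '
    /^clusterDNS:/ { in_dns = 1; next }
    in_dns && /^[ \t]*-/ { sub(/^[ \t]*-[ \t]*/, ""); print; exit }
    in_dns { exit }
  ' "$1"
}

# Example:
#   [ "$(kubelet_cluster_dns /usr/local/kubernetes/cfg/kubelet-conf.yml)" = "10.96.0.10" ] && echo "clusterDNS matches"
```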

Install Metrics Server

Download the metrics-server manifest:

wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.6.4/components.yaml

Edit the metrics-server manifest. The main changes are:

spec:
  # 0. Add node affinity so that metrics-server is deployed only on non-edge nodes
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: node-role.kubernetes.io/edge
            operator: DoesNotExist
          - key: node-role.kubernetes.io/agent
            operator: DoesNotExist
  containers:
  - args:
    - --cert-dir=/tmp
    - --secure-port=4443
    - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
    - --kubelet-use-node-status-port
    - --metric-resolution=15s
    - --kubelet-insecure-tls # 1. Add the following lines
    - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem
    - --requestheader-username-headers=X-Remote-User
    - --requestheader-group-headers=X-Remote-Group
    - --requestheader-extra-headers-prefix=X-Remote-Extra-
    image: registry-changsha.vonebaas.com/kubernetes/metrics-server/metrics-server:v0.6.4 # 2. Change the image to your own synced mirror (Aliyun in this case)
    ...
    ports:
    - containerPort: 4443
      name: https
      protocol: TCP
    ...
    volumeMounts:
    - mountPath: /tmp
      name: tmp-dir
    - mountPath: /etc/kubernetes/pki # 3. Mount the volume into the container
      name: ca-ssl
  nodeSelector:
    kubernetes.io/os: linux
  ...
  volumes:
  - emptyDir: {}
    name: tmp-dir
  - name: ca-ssl # 4. Expose the host certificate directory as a volume
    hostPath:
      path: /usr/local/kubernetes/ssl

Install metrics-server with kubectl:

kubectl create -f components.yaml

Check the Pod status:

kubectl get pods -n kube-system -l k8s-app=metrics-server
NAME                              READY   STATUS    RESTARTS   AGE
metrics-server-69c977b6ff-6ncw4   1/1     Running   0          4m7s

View the cluster metrics:

kubectl top pods -n kube-system
NAME                                      CPU(cores)   MEMORY(bytes)
calico-kube-controllers-97769f7c7-kv9d9   2m           12Mi
calico-node-ff66c                         19m          45Mi
calico-node-hf46z                         20m          45Mi
calico-node-x6nzb                         22m          42Mi
calico-node-xff8p                         19m          39Mi
calico-node-z9wdl                         22m          42Mi
coredns-7bf4bd64bd-fn8wc                  4m           13Mi
metrics-server-69c977b6ff-6ncw4           2m           16Mi

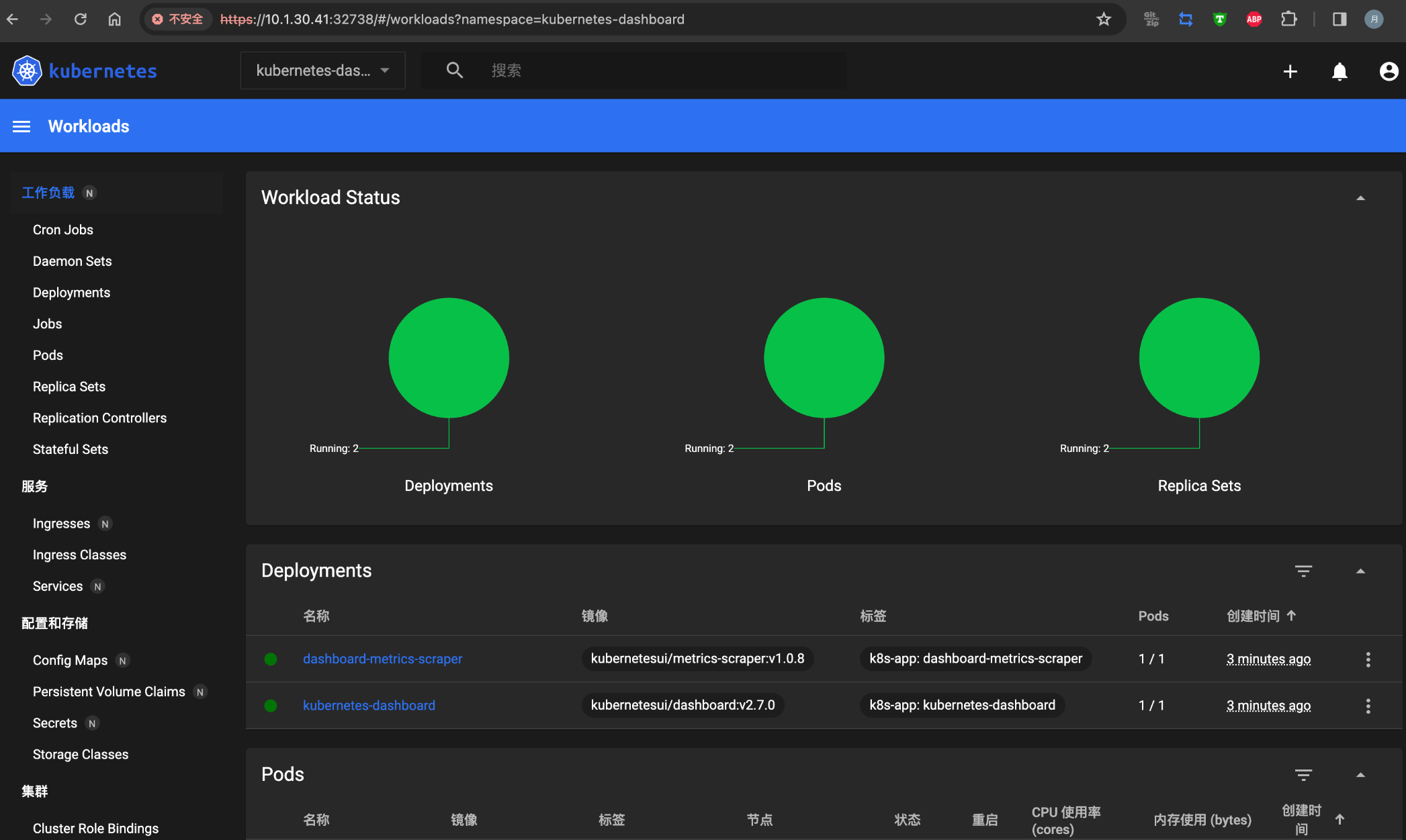

Install Dashboard

Official Dashboard GitHub repository:

https://github.com/kubernetes/dashboard

Find the latest installation manifest there and run the following command:

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

Check the status of the dashboard containers:

kubectl get pods -n kubernetes-dashboard

Change the Dashboard Service from type: ClusterIP to type: NodePort (skip this step if it is already NodePort):

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

Because the Dashboard uses a self-signed certificate, the browser may refuse to open it. To work around this, add the following flags to the Google Chrome launch command:

--test-type --ignore-certificate-errors

Open the Dashboard at the IP of any host running kube-proxy (or a VIP, if your setup has one) plus the Service's NodePort. Check the port with:

kubectl get svc -n kubernetes-dashboard kubernetes-dashboard
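The NodePort is the number after the colon in the PORT(S) column (`<port>:<nodePort>/TCP`). `kubectl get svc ... -o jsonpath='{.spec.ports[0].nodePort}'` returns it directly. If you only have the table output, the following minimal sketch of a hypothetical filter extracts it. It assumes the default column layout, in which PORT(S) is the fifth column:

```shell
#!/usr/bin/env bash
# Hypothetical filter: read `kubectl get svc` table output on stdin and print
# "<service-name> <nodePort>" for every mapping of the form <port>:<nodePort>/<proto>.
nodeports_from_svc_table() {
  awk 'NR > 1 {
    n = split($5, ports, ",")              # PORT(S) is the 5th column
    for (i = 1; i <= n; i++)
      if (split(ports[i], pp, "[:/]") == 3) print $1, pp[2]
  }'
}

# Example:
#   kubectl get svc -n kubernetes-dashboard kubernetes-dashboard | nodeports_from_svc_table
```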

Create an admin user

cat <<EOF | kubectl apply -f - -n kube-system
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system
EOF

Get the token value:

kubectl create token -n kube-system admin-user
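`kubectl create token` issues a time-limited token. Unless `--duration` is given, the API server decides the lifetime. The token is a JWT, so you can read its expiry (`exp`, in seconds since the epoch) by base64url-decoding the middle segment. The following is a minimal sketch, assuming GNU coreutils `base64`. `jwt_payload` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the decoded JSON payload (2nd segment) of a JWT.
# JWT segments are unpadded base64url, so map the alphabet back and re-pad.
jwt_payload() {
  local p
  p=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  while (( ${#p} % 4 != 0 )); do p="${p}="; done
  printf '%s' "$p" | base64 -d
}

# Example (the payload contains "exp"; `date -d @<exp>` shows it as a date):
#   jwt_payload "$(kubectl create token -n kube-system admin-user)"
```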